With Siri, Apple has moved the on-device voice recognition engine, called Voice Control, firmly to the cloud. This means that the ability iPhone users used to have, to call friends and family by talking to the phone whether or not there was data connectivity, is no longer there.

Is this a bad regression?

On the face of it, this is a tremendous regression – you now have to ‘pay’ to use a capability that was previously ‘free’, either by using up your data plan or by finding a WiFi connection.

In addition, even on a fast 3G connection, the latency when calling someone in the address book is now very noticeable, because a round trip to the cloud-hosted Siri is required before a simple call can be made.

Or a masterstroke to get free ‘Gold Set’ data?

This might actually be an incredible masterstroke.

First, as it turns out, Voice Control was used to call people, something you can’t do if you’re not connected to the network at all. And if you are connected to the network, it’s increasingly likely that you have data connectivity too. This is why, for the vast majority of people, there will be no noticeable degradation in functionality or quality of experience.

But just see what Apple gets for free! By moving even voice calling to the cloud, Apple gets its hands on valuable data to train its voice recognition engine – what we in the industry would call a ‘Gold Set’.

Here’s why. Let’s say you ask Siri, in your native accent, ‘Call John Appleseed’. Siri tries hard, and makes a reasonable guess that you did, indeed, mean to call John. The call gets connected, and you have a nice fictitious conversation with Mr Appleseed.

Since you let the call go through instead of cancelling it, Siri now knows with near certainty that the sound sample you uttered did represent the phrase ‘Call John Appleseed’. This can be used to train a model for your own voice, or generalized to all English speakers, or even used to create a model for ‘people living in the US speaking with a South Asian accent’.
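To make the idea concrete, here is a minimal sketch of how implicitly confirmed utterances could be turned into labeled training pairs. Everything in it is my own illustration: the `Utterance` record, its fields, and the `build_gold_set` and `group_gold_set` helpers are hypothetical names, and nothing here reflects how Apple actually stores or processes Siri data.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class Utterance:
    """Hypothetical log record for one voice-dialing request."""
    user_id: str
    audio: bytes          # placeholder for the recorded sound sample
    hypothesis: str       # the recognizer's best guess at what was said
    call_completed: bool  # True if the user let the call go through
    accent_group: str     # assumed coarse label, e.g. "us-south-asian"


def build_gold_set(utterances):
    """Keep (audio, text) pairs whose guess was confirmed by a completed call."""
    return [(u.audio, u.hypothesis) for u in utterances if u.call_completed]


def group_gold_set(utterances, key):
    """Split confirmed pairs into buckets, e.g. per user or per accent group."""
    groups = defaultdict(list)
    for u in utterances:
        if u.call_completed:
            groups[key(u)].append((u.audio, u.hypothesis))
    return dict(groups)


if __name__ == "__main__":
    logs = [
        Utterance("u1", b"a1", "call john appleseed", True, "us-south-asian"),
        Utterance("u1", b"a2", "call jon apple", False, "us-south-asian"),
        Utterance("u2", b"a3", "call mom", True, "us-general"),
    ]
    print(build_gold_set(logs))
    print(group_gold_set(logs, key=lambda u: u.user_id))
```

The same confirmed pairs can feed a personal model (group by `user_id`), a regional one (group by `accent_group`), or a general one (no grouping at all), which is exactly the range of uses described above.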

Blue-underlined words are your friend

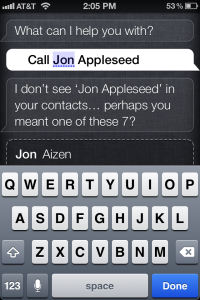

Siri underlines in blue the words that it has trouble understanding (see image above). Tap on them to choose an alternate word – I won’t be surprised if Apple uses this correction to refine an existing model, too.
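If corrections are indeed used this way (my speculation, not something Apple has confirmed), the mechanics could be as simple as the sketch below: the word the user picks replaces the uncertain one, and the corrected sentence becomes the label for the original audio. The function names and the word-list representation are hypothetical.

```python
def apply_correction(words, index, replacement):
    """Return a copy of the recognized words with one word swapped out."""
    corrected = list(words)
    corrected[index] = replacement
    return corrected


def correction_to_label(audio, words, index, replacement):
    """Pair the original audio with the user-corrected transcript."""
    return audio, " ".join(apply_correction(words, index, replacement))


if __name__ == "__main__":
    recognized = ["call", "jon", "appleseed"]
    print(correction_to_label(b"sample", recognized, 1, "john"))
```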

S*** that Siri says – also valuable learning data

Each time a Siri conversation becomes a meme and gets replayed on countless iPhone 4S handsets around the world, more Gold Set data gets created. In essence, Apple gets millions of voice samples, from countries all over the globe, saying the phrase ‘Siri, tell me a joke’. This data isn’t anonymous – it’s tied to the user who owns the phone, and thus can serve to improve a model specific to that user, instead of being a waste of Apple’s cloud resources.

In this sense, such interactions with Siri, whether calling people or just having fun with it, are a much better way of providing language training data to a voice recognition engine than reciting a preordained passage from a book, as was the norm with early voice recognition software.

My voice data, and trusting Apple

An ex-Siri engineer remarked recently that Apple had the trust needed to build a compelling product (not just technology) like Siri:

For Siri to be really effective, it has to learn a great deal about the user. If it knows where you work, where you live and what kind of places you like to go, it can really start to tailor itself as it becomes an expert on you individually. This requires a great deal of trust in the institution collecting this data. Siri didn’t have this, but Apple has earned their street cred.

I have no doubt Apple is building advanced personal or clique language models using the data they’re getting from Siri usage, and I can’t wait for Siri to start understanding my supposedly hard-to-understand accent well!

Amit

Decades back, we had Captain Kirk flip open his communicator to talk to his team or starship. Today we can do exactly that with cellphones, some clamshell models of which look very much like the communicator Kirk used.

I read a sci-fi novel by John Ringo recently in which the Galactics make contact and give humans a device called an AID: an artificial-intelligence-based personal assistant with supercomputer capabilities and human emotions. Siri seems to be the seed that will make the AID a reality a decade or so down the line, just as the wireless communicator became reality.

But we never saw Captain Kirk struggle with charging problems 🙂 So we have to see where Siri falls short of the sci-fi AID’s capabilities.

Couldn’t say any better. Good job Amit.

Cheers

Gurkirat